AI dubbing is changing how we watch content from around the world by breaking down language barriers quickly. Picture this: you're watching a foreign film, and it feels like the characters are speaking your language. That's what AI dubbing aims to do. One study projects that the AI dubbing market will grow by about 30% each year, which shows how quickly it's catching on across different fields.

But what is AI dubbing, really, and how does it work? Here, we'll explore how AI dubbing works, its benefits, and its challenges. We'll also see how it stacks up against traditional dubbing and look at companies like Deepbrain AI, with its AI Studios platform, that are driving this change. Finally, we'll touch on future trends and the ethical questions that come with this tech. So, let's dig in!

AI Dubbing: Definition and Overview

Understanding AI Dubbing Technology

AI dubbing leverages artificial intelligence to replace original video voices with new ones in different languages. It's revolutionizing video content localization by making the process quicker, more affordable, and accessible. This technology automates translation and voiceover through:

- Machine learning

- Generative algorithms

- Text-to-speech (TTS)

- Voice cloning

The outcome? Natural-sounding dubbed audio that is timed to match lip movements as closely as possible. A standout feature of AI dubbing is that it tries to preserve the original speaker's tone and emotion, so the dubbed version feels close to the original. The process involves:

- Transcribing the original audio

- Translating it

- Synthesizing a new voice

- Syncing it all to match lip movements and emotions

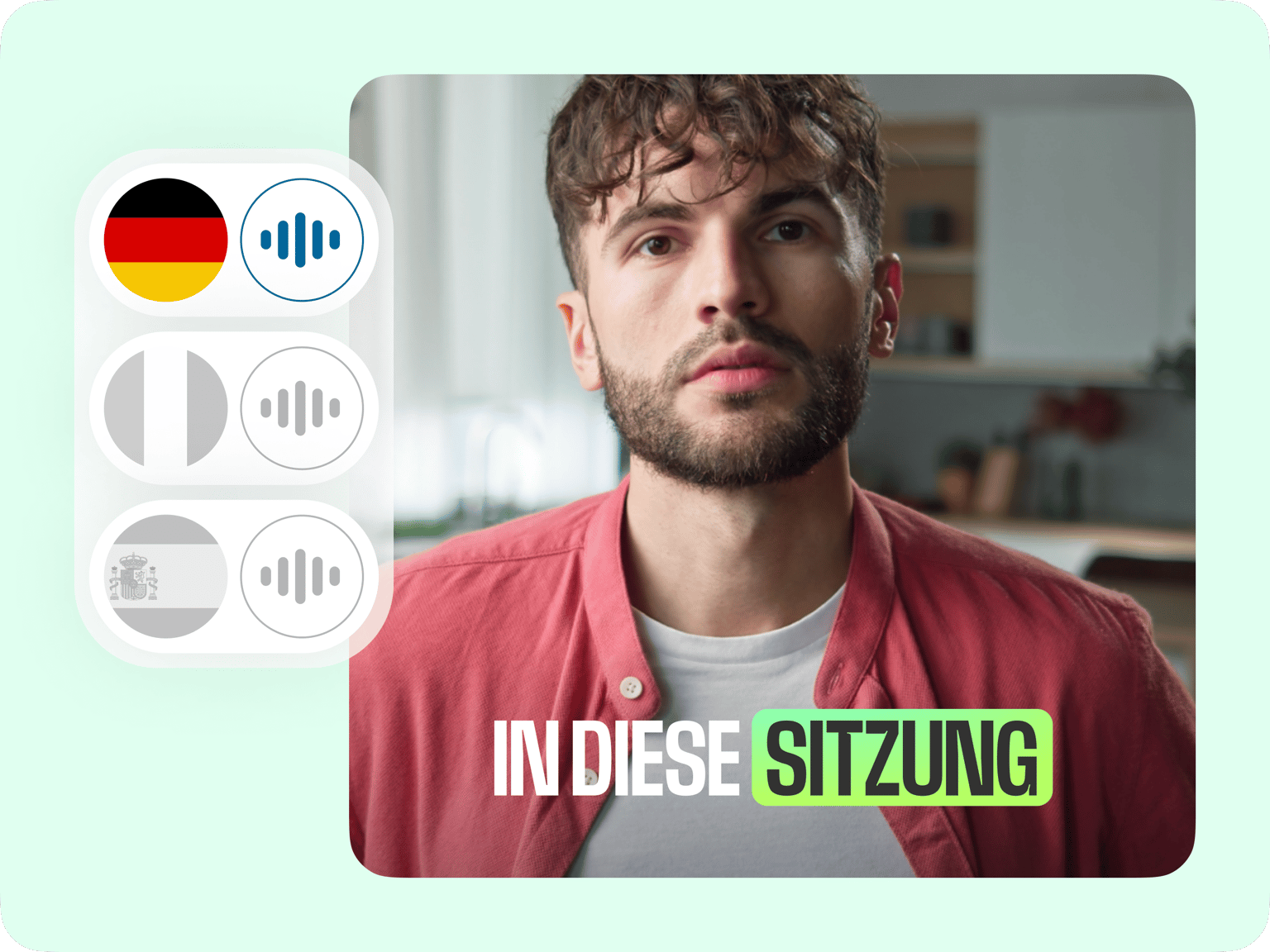
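The four steps listed above can be pictured as a small pipeline. The sketch below is only an illustration under stated assumptions: `Segment`, `run_dubbing_pipeline`, `fake_translate`, and `fake_synthesize` are hypothetical names, and the two `fake_*` functions are placeholders standing in for real translation and speech models. It starts from an already transcribed list of segments and leaves out the lip-sync step.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Segment:
    start: float          # seconds into the video
    end: float
    text: str             # source-language transcript
    translated: str = ""  # filled in by the translation stage
    audio: bytes = b""    # filled in by the synthesis stage


def run_dubbing_pipeline(
    segments: List[Segment],
    translate: Callable[[str], str],
    synthesize: Callable[[str], bytes],
) -> List[Segment]:
    """Translate and synthesize each transcribed segment, keeping its timing."""
    dubbed = []
    for seg in segments:
        translated = translate(seg.text)
        audio = synthesize(translated)
        dubbed.append(Segment(seg.start, seg.end, seg.text, translated, audio))
    return dubbed


# Placeholder stages so the sketch runs end to end (these are not real models).
def fake_translate(text: str) -> str:
    return f"[es] {text}"


def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")


transcript = [Segment(0.0, 1.8, "Hello there."), Segment(2.0, 4.5, "How are you?")]
for seg in run_dubbing_pipeline(transcript, fake_translate, fake_synthesize):
    print(f"{seg.start:.1f}-{seg.end:.1f}s  {seg.translated}")
```

Keeping the original start and end times on every segment is what lets a later stage line the new audio up with the picture.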

AI dubbing can handle vast amounts of content in numerous languages, making it ideal for large or ongoing projects. Its growing use spans industries such as film, TV, e-learning, podcasting, and social media, enabling global audience reach. Picture a streaming platform using AI to instantly translate and dub a TV series into multiple languages, with voices that mirror the actors' original tone and lip movements. This accelerates global distribution.

Key Technologies in AI Dubbing

AI dubbing integrates several technologies to deliver a seamless multilingual experience.

Role of Speech Recognition in AI Dubbing

Speech recognition is the foundation of AI dubbing. It analyzes the audio and converts spoken words into a text transcript, which is the first step toward translation. Modern models can handle many accents and dialects, which improves transcription accuracy.

Importance of Natural Language Processing (NLP) in Dubbing

Natural language processing is vital for translating while preserving the original message and emotion. It involves:

- Breaking down syntax

- Analyzing meaning

- Detecting sentiment

Speech Synthesis for Realistic AI Dubbing

Following translation, speech synthesis generates the dubbed audio. It creates synthetic voices that replicate human speech patterns, intonation, and emotion. Modern TTS systems, often enhanced with voice cloning, produce voices remarkably similar to real actors.

By integrating these technologies, AI dubbing offers a novel approach to content localization, making it accessible to a global audience with impressive efficiency and accuracy.

AI Dubbing Workflow Process

AI-Powered Transcription and Translation

The first step in AI dubbing is transcription. Here, AI-powered automatic speech recognition (ASR) converts spoken content into text, which then serves as the script for translation and dubbing. However, ASR isn't flawless. In noisy environments or with multiple speakers, it can result in a Word Error Rate (WER) of around 7.5%. This necessitates human intervention to double-check and ensure accuracy.
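To make the WER figure concrete, here is a minimal sketch of how Word Error Rate is commonly calculated: the number of word substitutions, deletions, and insertions needed to turn the ASR output into the reference transcript, divided by the number of words in the reference. The function name `word_error_rate` and the sample sentences are illustrative assumptions, not taken from any particular ASR tool.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # Word-level Levenshtein distance, keeping only the previous row.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # substitution, or a match when cost is 0
            ))
        prev = curr
    return prev[-1] / len(ref)


reference = "the quick brown fox jumps over the lazy dog"
asr_output = "the quick brown fox jumped over lazy dog"
print(f"WER: {word_error_rate(reference, asr_output):.1%}")
```

A WER of 7.5% means roughly seven or eight word errors for every 100 words in the reference, which is why a human pass over the transcript is still worthwhile.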

Following transcription, the text undergoes translation from one language to another using machine translation tools. Termbases and translation memories are employed to maintain accuracy and cultural relevance. Human linguists then refine the translation, ensuring it sounds natural and resonates with the target audience. For instance, a video in British English might be transcribed, translated into Spanish, and then polished by linguists to capture cultural nuances and idioms effectively.
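As a rough illustration of how a translation memory and a termbase fit into this step, the sketch below reuses an approved translation when one exists, falls back to machine translation otherwise, and flags output that misses an approved term. All names here (`translate_segment`, `missing_terms`, the sample entries) are hypothetical, and the `machine_translate` argument is a stand-in for whatever MT engine is actually used.

```python
from typing import Callable, Dict, List, Tuple


def translate_segment(
    source: str,
    translation_memory: Dict[str, str],
    machine_translate: Callable[[str], str],
) -> Tuple[str, str]:
    """Return (translation, origin); origin is 'memory' or 'machine'."""
    key = source.strip().lower()
    if key in translation_memory:
        return translation_memory[key], "memory"
    return machine_translate(source), "machine"


def missing_terms(source: str, target: str, termbase: Dict[str, str]) -> List[str]:
    """List source terms whose approved translation is absent from the target."""
    src, tgt = source.lower(), target.lower()
    return [
        term
        for term, approved in termbase.items()
        if term.lower() in src and approved.lower() not in tgt
    ]


# Illustrative data only.
memory = {"welcome back.": "Bienvenidos de nuevo."}
terms = {"voice cloning": "clonación de voz"}

text, origin = translate_segment("Welcome back.", memory, lambda s: s)
print(origin, "->", text)

draft = "Esta herramienta usa copia de voz."
print(missing_terms("This tool uses voice cloning.", draft, terms))
```

Anything returned by `missing_terms` would be a natural item to hand to the linguists who polish the translation.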

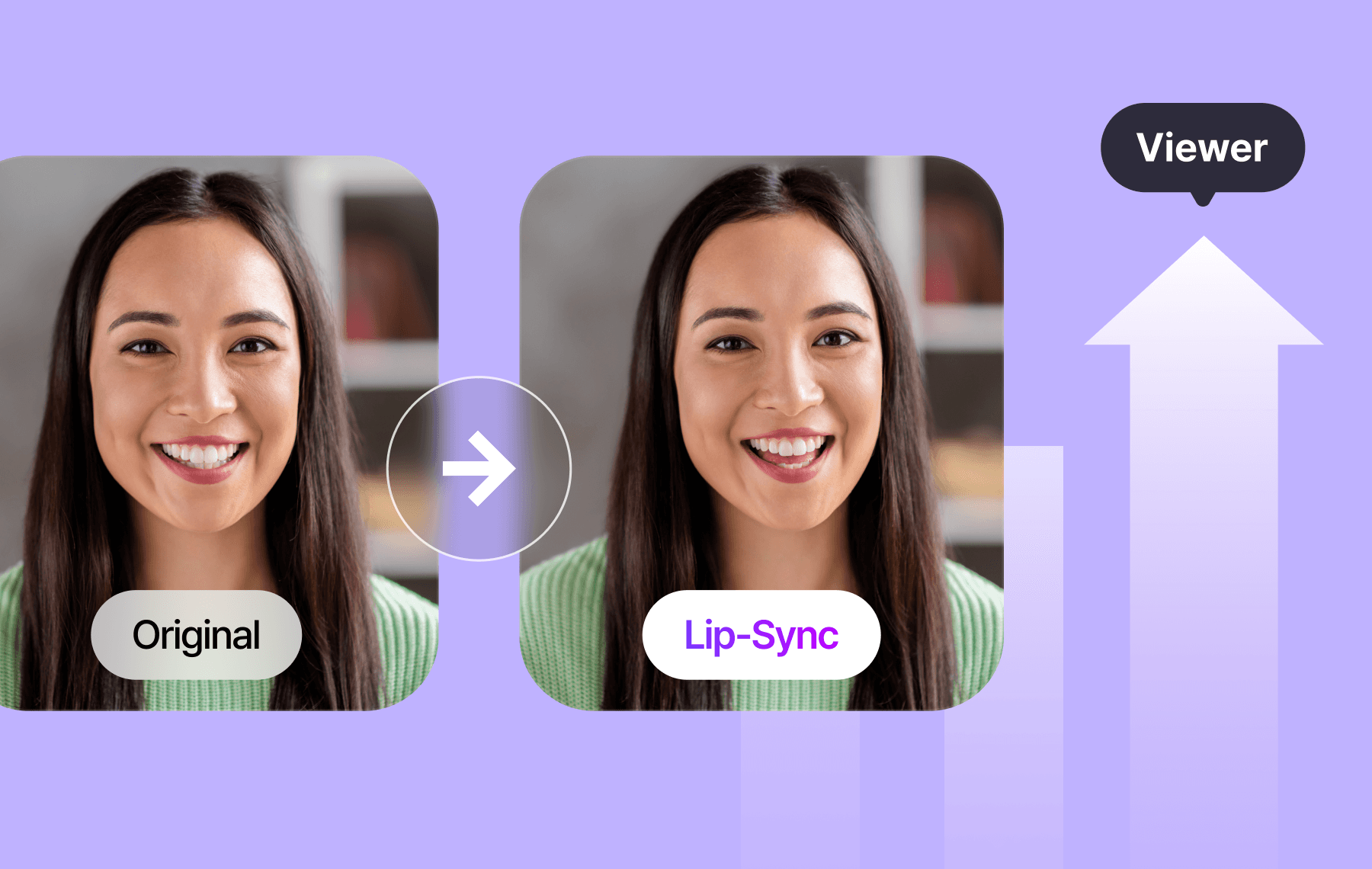

Advanced AI Voice Synthesis and Lip-Syncing

After translation, AI voice synthesis takes over. It generates a natural-sounding voice in the target language using text-to-speech (TTS) and voice cloning technologies. These tools aim to mimic the original speaker's tone and emotion. Some AI dubbing solutions incorporate emotional Text-to-Speech (eTTS) and Speech-to-Speech (STS) to enhance expressiveness.

Syncing the voice with lip movements is crucial for a natural appearance. AI employs audio synchronization to align dialogue with lip movements, ensuring both look and sound authentic. Technologies like Cross-Lingual Prosody Transfer (XLPT) help preserve the emotional and tonal essence of the original speech across languages. For example, Deepdub combines speech-to-speech technology with voice actor recordings to produce high-quality, emotionally rich dubbing.
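One simple piece of the synchronization problem is timing: the dubbed line has to fit into the same time slot as the original line. The sketch below covers only that piece, not visual lip-sync, and it is not how Deepdub or any other vendor does it. `TimedLine`, `speed_factor`, `needs_rewrite`, and the 0.85 to 1.2 rate limits are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class TimedLine:
    start: float            # original line start, in seconds
    end: float              # original line end, in seconds
    dubbed_duration: float  # length of the synthesized audio, in seconds


def speed_factor(line: TimedLine) -> float:
    """Playback rate needed to fit the dubbed audio into the original slot.

    A value above 1 means the audio must be sped up, below 1 slowed down.
    """
    slot = line.end - line.start
    if slot <= 0:
        raise ValueError("line must have a positive duration")
    return line.dubbed_duration / slot


def needs_rewrite(line: TimedLine, min_rate: float = 0.85, max_rate: float = 1.2) -> bool:
    """Flag lines that would need too much stretching to sound natural."""
    rate = speed_factor(line)
    return rate < min_rate or rate > max_rate


lines = [TimedLine(10.0, 12.0, 2.2), TimedLine(12.5, 14.0, 2.4)]
for line in lines:
    print(f"rate={speed_factor(line):.2f} rewrite={needs_rewrite(line)}")
```

When a line is flagged, shortening or rephrasing the translation is usually a better fix than stretching the audio further, since heavy speed changes are easy to hear.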

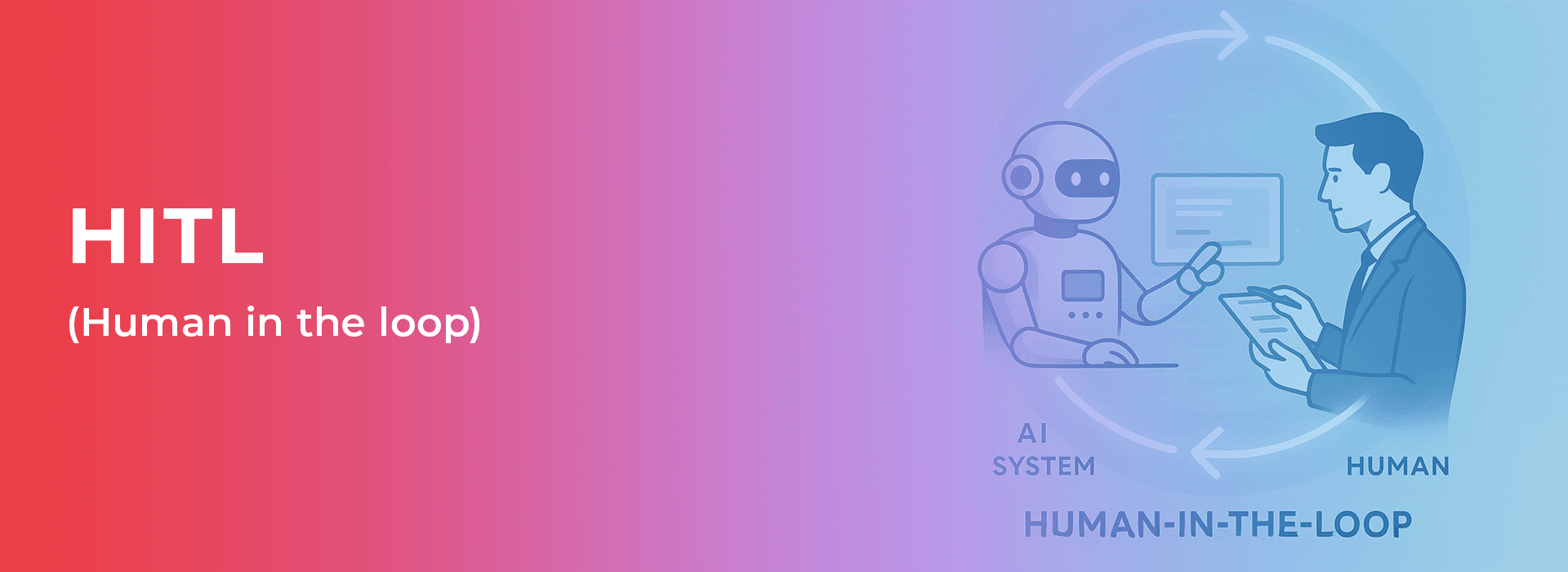

Human-in-the-Loop for AI Dubbing Quality Assurance

Despite advances in AI, the human touch remains essential. Human-in-the-loop (HITL) involves expert linguists and dubbing professionals reviewing and refining AI-generated outputs. They correct ASR and translation errors, ensuring the final product meets high standards.

Some AI dubbing services involve human directors and voice actors to guide AI voice creation. This synergy of human creativity and AI efficiency results in dubbing that resonates with audiences. For instance, 3Play Media utilizes HITL, where AI performs the initial work, and humans refine it, ensuring a natural, high-quality outcome. This collaboration between AI and human expertise is critical for producing dubbed content that is both technically sound and emotionally engaging.
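A common way to organize human-in-the-loop review is to send only the uncertain segments to people. The sketch below shows that idea under stated assumptions: the confidence scores are assumed to come from the ASR and translation systems, the 0.9 threshold is arbitrary, and `DubbedSegment` and `route_for_review` are hypothetical names. It is not a description of 3Play Media's or any other provider's workflow.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class DubbedSegment:
    segment_id: int
    text: str
    asr_confidence: float  # 0.0 to 1.0, assumed to be reported by the ASR system
    mt_confidence: float   # 0.0 to 1.0, assumed to be reported by the MT system


def route_for_review(
    segments: List[DubbedSegment], threshold: float = 0.9
) -> Tuple[List[DubbedSegment], List[DubbedSegment]]:
    """Split segments into (auto_approved, needs_human_review)."""
    auto_approved, needs_review = [], []
    for seg in segments:
        # The weakest stage decides: one low score is enough to ask a human.
        if min(seg.asr_confidence, seg.mt_confidence) >= threshold:
            auto_approved.append(seg)
        else:
            needs_review.append(seg)
    return auto_approved, needs_review


batch = [
    DubbedSegment(1, "Welcome back.", 0.98, 0.95),
    DubbedSegment(2, "It's raining cats and dogs.", 0.97, 0.62),
    DubbedSegment(3, "(crowd noise) ...over there!", 0.55, 0.90),
]
approved, review = route_for_review(batch)
print("auto:", [s.segment_id for s in approved])
print("review:", [s.segment_id for s in review])
```

In practice many teams still review everything for high-stakes content and use a filter like this only to prioritize where reviewers spend their time.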

Benefits and Challenges of AI Dubbing

Key Advantages of AI Dubbing Technology

AI dubbing is revolutionizing the media and entertainment industry. Here are some significant advantages:

- Speed: The dubbing process, which traditionally took months, can now be completed in hours or days. This rapid turnaround allows creators to stay ahead of trends and meet consumer demands.

- Cost-Effectiveness: By eliminating the need for voice actors, studio time, and extensive post-production, AI dubbing reduces costs significantly, making it ideal for large-scale projects.

- Multilingual Content Creation: AI enables the simultaneous creation of content in multiple languages, reaching a broader global audience.

- Consistent Voice Quality: Ensuring uniformity in voice quality is crucial for franchises and brands. AI dubbing excels in maintaining this consistency.

- Personalization: AI can customize voices to adapt to regional accents or user preferences, enhancing engagement.

- Workflow Integration: AI dubbing integrates smoothly into existing workflows, offering features like accurate lip-sync, tone adjustments, and background noise reduction.

- Barrier-Free Communication: By breaking language barriers, AI dubbing makes content accessible to non-English speakers or those preferring localized material.

- Continuous Operation: AI technology operates around the clock, enabling ongoing production without the need for additional personnel.

- Automated Syncing: It can automatically sync dubbed audio with video, saving both time and effort.

Challenges Facing AI Dubbing Solutions

Despite its advantages, AI dubbing faces several challenges:

- Emotional Depth: AI often struggles to capture the emotional nuances required for scenes demanding warmth or tension, resulting in flat-sounding voices.

- Complex Scenes: Scenes with multiple speakers require precise timing and emotional interplay, which can be difficult for AI to replicate accurately.

- Error Introduction: Automated speech recognition and machine translation can introduce errors, necessitating human oversight for accuracy.

- Cultural Nuances: AI may struggle with cultural nuances, such as jokes or idioms, which require a deep understanding of context.

- Lip-Syncing Challenges: Ensuring accurate lip-syncing is particularly challenging in action scenes or when dealing with languages that have different structures.

- Data Privacy and Security: The processing of voice data by AI raises concerns about data privacy and potential misuse.

- Ethical Considerations: Questions about the rights of voice actors and the potential misuse of synthetic voices are emerging as ethical concerns.

- Language Support: AI tools may not support all languages or handle tonal variations well, impacting quality and reach.

- Need for Human Oversight: To ensure cultural sensitivity, emotional authenticity, and correct terminology usage, human oversight remains essential.

AI Dubbing vs. Traditional Dubbing

AI Dubbing and Traditional Dubbing: A Comparative Analysis

AI dubbing and traditional dubbing both aim to make audio-visual content accessible across different languages and regions, but they achieve this through distinct methods. AI dubbing is often more cost-effective and scalable than traditional methods. It reduces the need for professional voice actors and studio time, offering a fast solution capable of handling large volumes of content in multiple languages simultaneously.

However, traditional dubbing excels in providing emotional depth, subtlety, and cultural sensitivity—areas where AI can sometimes fall short. AI-generated voices may occasionally sound robotic or unnatural, particularly when dealing with complex elements such as technical terms, accents, idioms, and cultural jokes.

While AI can maintain voice consistency across various projects and languages, human oversight remains crucial to ensure quality and cultural accuracy. Additionally, AI dubbing raises privacy and ethical concerns, such as voice cloning and the misuse of personal data.

In summary:

- AI Dubbing: Ideal for tight budgets or deadlines.

- Traditional Dubbing: Preferred for content requiring strong emotional impact and cultural insight.

AI Dubbing Use Cases and Industry Impact

AI dubbing is gaining traction in industries like education, marketing, and news due to its ability to localize content rapidly and reach a global audience. Content creators leverage AI dubbing to explore new markets, monitoring metrics such as views and subscriber growth from dubbed versions.

In the film industry, AI dubbing is transforming localization processes by making them faster and more cost-effective, while also offering personalized viewer experiences. A hybrid approach, combining AI for initial translation and human input for refinement, is becoming increasingly popular, balancing efficiency with quality.

AI dubbing enhances content accessibility for individuals who prefer listening over reading subtitles or who find text translations challenging. However, as AI dubbing expands, it may impact traditional voice actors, potentially leading to job shifts or an increased focus on training AI systems.

- Educational Platforms: Use AI dubbing to swiftly adapt content for international students.

- Film Studios: Adopt hybrid models to ensure both quality and efficiency in localization efforts.

Deepbrain AI and AI Studios

🌟 Deepbrain AI Overview

Deepbrain AI specializes in synthetic media and AI-driven human simulations. By leveraging cutting-edge AI, they simplify and enhance video creation. Their innovative tools, such as AI avatars and text-to-video features, have notably impacted industries, particularly Japan's broadcasting sector with AI news anchors. Deepbrain AI is committed to making their technology accessible and valuable for diverse businesses, focusing on innovation, user-friendliness, and effectiveness.

Innovative AI Video Products

Deepbrain AI offers a suite of products that showcase their expertise, particularly in AI video creation and dubbing technology. These tools are designed to transform how we create and consume audio and video content.

AI Dubbing Technology

A standout feature is AI dubbing, which allows videos to be translated into multiple languages while maintaining natural lip movements. This ensures that the dubbed content retains the original tone and emotion, making it a game-changer for creators aiming to reach global audiences without compromising the original vibe.

AI Studios Platform

AI Studios by Deepbrain AI is a platform that converts text into fully animated videos with hyper-realistic AI avatars. Key features include:

- Customization: Personalize avatars and utilize AI voices.

- Translation: Translate videos seamlessly for global reach.

- User-Friendly Interface: Enhance and refine content with ease.

AI Studios integrates various AI tools, including dubbing, to ensure superior production quality, setting new standards in digital content creation and distribution.

Through these products and innovations, Deepbrain AI demonstrates the true potential of AI in digital content creation.

Future Trends in AI Dubbing and Ethical Considerations

Emerging AI Dubbing Technologies

AI dubbing is advancing rapidly, driven largely by improvements in voice synthesis. Synthetic voices now sound far more natural and can be customized by gender, accent, and emotion. This progress helps to reduce the "uncanny valley" effect and is making AI dubbing increasingly popular in sectors such as e-learning, video games, and accessibility services, where scalable and efficient dubbing is in clear demand.

A key trend is the integration of voice synthesis with translation. This development allows a speaker's unique vocal characteristics, including pitch and emotions, to be preserved across different languages. By 2025, AI models are projected to achieve an 85% accuracy rate in translating idioms and emotions. Additionally, AI systems capable of handling speech-to-text, speech-to-speech, and text-to-text translations are expected to be incorporated into 35% of AI speech translation tools by that time.

By 2028, real-time AI dubbing with minimal delay is anticipated to become standard, particularly for live streams. This will coincide with highly personalized voice cloning that aligns with user-specific details like accent, tone, and age. AI dubbing tools are also improving in automated lip-syncing, voice matching, and integration with cloud platforms to enhance dubbing speed, quality, and accessibility in media, gaming, and training. Platforms like YouTube are expanding AI auto-dubbing capabilities for all creators, enabling them to reach a global audience by translating and dubbing videos into multiple languages.

Ethical Challenges in AI Dubbing Evolution

The rapid advancement of AI dubbing technology raises several ethical concerns, including the potential misuse of deepfakes, voice identity theft, and unauthorized use of voice data. These issues can lead to misinformation, fraud, and privacy violations. Data privacy is crucial, as AI dubbing requires extensive voice data, and improper handling can infringe on voice actors' rights, resulting in legal challenges. To address these challenges, transparency, consent, and security are essential.

AI dubbing also poses job-related concerns, as it might displace traditional voice actors and translators. However, AI is generally viewed as an enhancement to human creativity and emotion, rather than a replacement. Leading AI dubbing companies prioritize ethical practices by collaborating with human language experts, cultural consultants, and voice actors to maintain the artistic and cultural integrity of dubbed content. For instance, Deepdub employs a team of human experts alongside AI to ensure that dubbed content respects the original tone and context.

Despite these concerns, AI dubbing is widening access to content by overcoming language barriers, particularly in education, healthcare, and corporate training, where it enables personalized and multilingual material. Industry trends emphasize a human-AI partnership, where AI accelerates dubbing processes, but humans retain control over final creative decisions, cultural insights, and emotional elements. Addressing ethical issues necessitates robust guidelines and technical safeguards, such as secure protocols, multi-step dubbing processes, and adaptable voice engines, to ensure secure, culturally sensitive, and high-quality dubbed content.

AI Dubbing: Commonly Asked Questions

AI Dubbing vs. Traditional Dubbing: Handling Emotional Nuances

AI dubbing leverages advanced technologies like Cross-Lingual Prosody Transfer (XLPT) and emotional Text-to-Speech (eTTS) to preserve the emotional tone and rhythm of the original speech when dubbing into different languages. These tools aim to give the dubbed version the same emotional intensity as the original.

In contrast, traditional dubbing relies on skilled voice actors and directors to convey emotions through pitch, pace, and intonation. While AI is rapidly improving in detecting these emotional cues, it still falls short of the full expressiveness offered by human actors. To bridge this gap, some approaches combine AI with human input. For example, Deepdub's hybrid method involves voice actors guiding AI to create richly emotional synthetic voices for authentic dubbing.

Challenges of Implementing AI Dubbing for Global Content

AI dubbing faces significant challenges in capturing the complete emotional range of human voice actors, necessitating ongoing advancements in machine learning and human-AI collaboration. Achieving accurate translations and lip-syncing across various languages is complex but essential for a seamless viewing experience.

Additional hurdles include managing licenses, addressing ethical concerns, and accommodating diverse voices and accents to meet global audience expectations. AI dubbing must process vast amounts of content swiftly without compromising quality, demanding robust infrastructure and sophisticated AI models. A major challenge is synchronizing AI voices with lip movements for multiple speakers in a video. AI Studios addresses this by offering lip-syncing for over 10 speakers per video, with options for regional accents.

Ensuring Accuracy and Cultural Relevance in AI Dubbing Translations

AI dubbing employs natural language processing and large language models to achieve precise script translations. Human intervention often refines these translations, ensuring cultural relevance and contextual appropriateness. Professional translators and dubbing directors adapt scripts so idioms, humor, and cultural references resonate with the target audience.

Advanced AI platforms allow customization of voice traits, accents, and dialects to align with regional preferences, enhancing authenticity. Feedback loops from human editors maintain the accuracy and cultural sensitivity of translations. Platforms like AI Studios offer regional accent choices and support for over 30 languages, facilitating voiceovers that meet local expectations. Despite these advancements, achieving cultural relevance remains challenging, making human oversight crucial to maintaining high standards for global content.


| Aspect | AI Dubbing | Traditional Dubbing |
|---|---|---|
| Cost | More cost-effective, reduces need for professional voice actors | Higher cost due to professional voice actors and studio time |
| Speed | Faster, can handle large volumes of content quickly | Slower, time-consuming process |
| Emotional Depth | May lack emotional nuances | Provides strong emotional depth and subtlety |
| Cultural Sensitivity | Can struggle with cultural nuances, requires human oversight | High cultural sensitivity with experienced actors and directors |
| Voice Consistency | Maintains consistent voice quality across projects | May vary depending on actors |
| Privacy and Ethics | Raises concerns about data privacy and voice cloning | Fewer privacy issues, traditional methods |
| Scalability | Highly scalable, supports multiple languages simultaneously | Less scalable, requires more resources |
| Human Oversight | Requires human input for quality and cultural accuracy | Relies heavily on human expertise |
| Use Cases | Ideal for tight budgets, rapid localization | Preferred for high-quality emotional and cultural content |